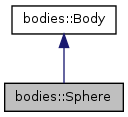

Definition of a sphere.

#include <bodies.h>

Public Member Functions

| virtual BodyPtr | cloneAt (const Eigen::Affine3d &pose, double padding, double scale) const |

| Get a clone of this body, located at the pose pose and with possibly different padding and scaling, given by padding and scaling. This function is useful for implementing thread safety when bodies need to be moved around. | |

| virtual void | computeBoundingCylinder (BoundingCylinder &cylinder) const |

| Compute the bounding cylinder for the body, in its current pose. Scaling and padding are accounted for. | |

| virtual void | computeBoundingSphere (BoundingSphere &sphere) const |

| Compute the bounding sphere for the body, in its current pose. Scaling and padding are accounted for. | |

| virtual double | computeVolume () const |

| Compute the volume of the body. This method includes changes induced by scaling and padding. | |

| virtual bool | containsPoint (const Eigen::Vector3d &p, bool verbose=false) const |

| Check if a point is inside the body. | |

| virtual std::vector< double > | getDimensions () const |

| Get the radius of the sphere. | |

| virtual bool | intersectsRay (const Eigen::Vector3d &origin, const Eigen::Vector3d &dir, EigenSTL::vector_Vector3d *intersections=NULL, unsigned int count=0) const |

| Check if a ray intersects the body, and find the set of intersections, in order, along the ray. A maximum number of intersections can be specified as well. If that number is 0, all intersections are returned. | |

| virtual bool | samplePointInside (random_numbers::RandomNumberGenerator &rng, unsigned int max_attempts, Eigen::Vector3d &result) |

| Sample a point inside the body using the given random number generator. Sampling may take several attempts; the function fails (returns false) after max_attempts attempts. On success (returns true), the point is written to result. | |

| Sphere () | |

| Sphere (const shapes::Shape *shape) | |

| virtual | ~Sphere () |

Protected Member Functions

| virtual void | updateInternalData () |

| This function is called every time a change to the body is made, so that intermediate values stored for efficiency reasons are kept up to date. | |

| virtual void | useDimensions (const shapes::Shape *shape) |

| Depending on the shape, this function copies the relevant data to the body. | |

Protected Attributes

| Eigen::Vector3d | center_ |

| double | radius2_ |

| double | radius_ |

| double | radiusU_ |

Detailed Description

Definition of a sphere.

Constructor & Destructor Documentation

| bodies::Sphere::Sphere | ( | ) | [inline] |

| bodies::Sphere::Sphere | ( | const shapes::Shape * | shape | ) | [inline] |

| virtual bodies::Sphere::~Sphere | ( | ) | [inline, virtual] |

Member Function Documentation

| boost::shared_ptr< bodies::Body > bodies::Sphere::cloneAt | ( | const Eigen::Affine3d &pose, double padding, double scaling | ) | const [virtual] |

Get a clone of this body, located at the pose pose and with possibly different padding and scaling, given by padding and scaling. This function is useful for implementing thread safety when bodies need to be moved around.

Implements bodies::Body.

Definition at line 152 of file bodies.cpp.

| void bodies::Sphere::computeBoundingCylinder | ( | BoundingCylinder & | cylinder | ) | const [virtual] |

Compute the bounding cylinder for the body, in its current pose. Scaling and padding are accounted for.

Implements bodies::Body.

Definition at line 174 of file bodies.cpp.
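To illustrate what a bounding cylinder of a sphere looks like, here is a minimal standalone sketch. It does not use the library: `CylinderSketch` and `boundingCylinderOfSphere` are hypothetical names, and `effective_radius` is assumed to be the radius after scaling and padding have already been applied.

```cpp
#include <cassert>

// Hypothetical stand-in for the library's BoundingCylinder; the field names
// are assumptions made for this sketch only.
struct CylinderSketch {
  double center[3];
  double radius;
  double length;
};

// The tightest cylinder around a sphere shares its center and radius and has
// a length equal to the sphere's diameter.
CylinderSketch boundingCylinderOfSphere(const double center[3], double effective_radius) {
  assert(effective_radius >= 0.0);
  CylinderSketch c;
  for (int i = 0; i < 3; ++i) {
    c.center[i] = center[i];
  }
  c.radius = effective_radius;
  c.length = 2.0 * effective_radius;
  return c;
}
```

Because a sphere is rotationally symmetric, the orientation of the cylinder axis does not matter in this sketch, so only the center is carried over.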

| void bodies::Sphere::computeBoundingSphere | ( | BoundingSphere & | sphere | ) | const [virtual] |

Compute the bounding sphere for the body, in its current pose. Scaling and padding are accounted for.

Implements bodies::Body.

Definition at line 168 of file bodies.cpp.

| double bodies::Sphere::computeVolume | ( | ) | const [virtual] |

Compute the volume of the body. This method includes changes induced by scaling and padding.

Implements bodies::Body.

Definition at line 163 of file bodies.cpp.
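As a rough illustration of how scaling and padding can enter the volume, the sketch below assumes the effective radius is `radius * scale + padding`. This page does not state that formula, so treat it as an assumption; `effectiveRadius` and `sphereVolume` are hypothetical free functions, not library calls.

```cpp
#include <cassert>
#include <cmath>

// Assumption for this sketch: scaling multiplies the radius, padding is then
// added on top of the scaled radius.
double effectiveRadius(double radius, double scale, double padding) {
  return radius * scale + padding;
}

// Volume of a sphere, V = 4/3 * pi * r^3, evaluated at the effective radius.
double sphereVolume(double radius, double scale, double padding) {
  const double pi = std::acos(-1.0);
  const double r = effectiveRadius(radius, scale, padding);
  assert(r >= 0.0);
  return 4.0 * pi * r * r * r / 3.0;
}
```

Under this assumption, doubling the scale of an unpadded sphere multiplies its volume by eight, since the volume grows with the cube of the radius.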

| bool bodies::Sphere::containsPoint | ( | const Eigen::Vector3d &p, bool verbose = false | ) | const [virtual] |

Check if a point is inside the body.

Implements bodies::Body.

Definition at line 129 of file bodies.cpp.
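A point-in-sphere test can be sketched without the library by comparing squared distances, which avoids a square root. `Vec3` and `sphereContainsPoint` are hypothetical names; treating points exactly on the surface as inside is a choice made for this sketch and may differ from the library's behavior.

```cpp
#include <cassert>

// Minimal 3D vector used only in this sketch (the library uses Eigen::Vector3d).
struct Vec3 {
  double x, y, z;
};

// Returns true if p lies inside or on a sphere with the given center and
// effective radius (scaling and padding assumed to be already applied).
bool sphereContainsPoint(const Vec3& center, double effective_radius, const Vec3& p) {
  assert(effective_radius >= 0.0);
  const double dx = p.x - center.x;
  const double dy = p.y - center.y;
  const double dz = p.z - center.z;
  // Compare squared distance against squared radius.
  return dx * dx + dy * dy + dz * dz <= effective_radius * effective_radius;
}
```

Caching the squared radius, as the `radius2_` attribute name suggests, would save one multiplication per query.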

| std::vector< double > bodies::Sphere::getDimensions | ( | ) | const [virtual] |

Get the radius of the sphere.

| bool bodies::Sphere::intersectsRay | ( | const Eigen::Vector3d &origin, const Eigen::Vector3d &dir, EigenSTL::vector_Vector3d *intersections = NULL, unsigned int count = 0 | ) | const [virtual] |

Check if a ray intersects the body, and find the set of intersections, in order, along the ray. A maximum number of intersections can be specified as well. If that number is 0, all intersections are returned.

Implements bodies::Body.

Definition at line 202 of file bodies.cpp.
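The behavior described above (ordered intersections, with `count = 0` meaning "return all") can be sketched with the standard quadratic solution for a ray and a sphere. This is a standalone illustration, not the code in bodies.cpp: `Vec3`, `dot`, and `raySphereIntersections` are hypothetical names, and ignoring intersections behind the ray origin is an assumption of the sketch.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 {
  double x, y, z;
};

static double dot(const Vec3& a, const Vec3& b) {
  return a.x * b.x + a.y * b.y + a.z * b.z;
}

// Returns true if the ray origin + t * dir (t >= 0) hits the sphere.
// Hits are appended to *hits in order of increasing t; if count > 0,
// at most count hits are stored.
bool raySphereIntersections(const Vec3& center, double radius, const Vec3& origin,
                            const Vec3& dir, std::vector<Vec3>* hits = nullptr,
                            unsigned int count = 0) {
  const double len = std::sqrt(dot(dir, dir));
  assert(len > 0.0);
  const Vec3 d = {dir.x / len, dir.y / len, dir.z / len};
  const Vec3 oc = {origin.x - center.x, origin.y - center.y, origin.z - center.z};
  // With unit d: t^2 + 2*b*t + c = 0, where b = d.oc and c = |oc|^2 - r^2.
  const double b = dot(d, oc);
  const double c = dot(oc, oc) - radius * radius;
  const double disc = b * b - c;
  if (disc < 0.0) return false;  // the line misses the sphere
  const double s = std::sqrt(disc);
  const double ts[2] = {-b - s, -b + s};  // near root first
  bool hit = false;
  unsigned int stored = 0;
  for (int i = 0; i < 2; ++i) {
    if (ts[i] < 0.0) continue;                   // behind the ray origin
    if (i == 1 && s == 0.0 && hit) continue;     // tangent ray: report once
    hit = true;
    if (hits) {
      const Vec3 p = {origin.x + ts[i] * d.x, origin.y + ts[i] * d.y,
                      origin.z + ts[i] * d.z};
      hits->push_back(p);
      if (count > 0 && ++stored >= count) break;
    }
  }
  return hit;
}
```

For example, a ray from (-2, 0, 0) along +x against a unit sphere at the origin yields the entry point (-1, 0, 0) followed by the exit point (1, 0, 0); passing `count = 1` keeps only the entry point.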

| bool bodies::Sphere::samplePointInside | ( | random_numbers::RandomNumberGenerator &rng, unsigned int max_attempts, Eigen::Vector3d &result | ) | [virtual] |

Sample a point inside the body using the given random number generator. Sampling may take several attempts; the function fails (returns false) after max_attempts attempts. On success (returns true), the point is written to result.

Reimplemented from bodies::Body.

Definition at line 182 of file bodies.cpp.
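The attempt-limited contract described above matches a rejection-sampling loop, sketched below. This is an assumption about the general technique, not a copy of the library code: it substitutes `std::mt19937` for `random_numbers::RandomNumberGenerator`, and `Vec3` and `samplePointInSphere` are hypothetical names.

```cpp
#include <cassert>
#include <random>

struct Vec3 {
  double x, y, z;
};

// Rejection sampling: draw points uniformly from the bounding cube and accept
// the first one that falls inside the sphere. Gives up after max_attempts.
bool samplePointInSphere(std::mt19937& rng, const Vec3& center, double radius,
                         unsigned int max_attempts, Vec3& result) {
  assert(radius > 0.0);
  std::uniform_real_distribution<double> offset(-radius, radius);
  for (unsigned int i = 0; i < max_attempts; ++i) {
    const double dx = offset(rng);
    const double dy = offset(rng);
    const double dz = offset(rng);
    if (dx * dx + dy * dy + dz * dz <= radius * radius) {
      result.x = center.x + dx;
      result.y = center.y + dy;
      result.z = center.z + dz;
      return true;  // result is only written on success
    }
  }
  return false;
}
```

A sphere fills about 52% of its bounding cube (pi / 6), so each attempt in this sketch succeeds roughly half the time and a small `max_attempts` is usually enough.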

| void bodies::Sphere::updateInternalData | ( | ) | [protected, virtual] |

This function is called every time a change to the body is made, so that intermediate values stored for efficiency reasons are kept up to date.

Implements bodies::Body.

Definition at line 145 of file bodies.cpp.
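The caching pattern described above can be sketched as follows. The interpretation of the cached members is an assumption based on their names (`radiusU_` as the scaled and padded radius, `radius2_` as its square); `SphereCacheSketch` and its setters are hypothetical and not part of the library.

```cpp
#include <cassert>

// Sketch of a body that recomputes derived values whenever an input changes,
// so that queries can read the cached values directly.
class SphereCacheSketch {
public:
  explicit SphereCacheSketch(double radius)
    : radius_(radius), scale_(1.0), padding_(0.0), radiusU_(0.0), radius2_(0.0) {
    updateInternalData();
  }

  void setScale(double scale) {
    scale_ = scale;
    updateInternalData();
  }

  void setPadding(double padding) {
    padding_ = padding;
    updateInternalData();
  }

  double effectiveRadius() const { return radiusU_; }
  double effectiveRadiusSquared() const { return radius2_; }

private:
  // Assumed formula: scaled radius plus padding, then its square.
  void updateInternalData() {
    radiusU_ = radius_ * scale_ + padding_;
    assert(radiusU_ >= 0.0);
    radius2_ = radiusU_ * radiusU_;
  }

  double radius_;
  double scale_;
  double padding_;
  double radiusU_;  // cached effective radius
  double radius2_;  // cached squared effective radius
};
```

Every mutator funnels through `updateInternalData()`, so a query never sees a stale cached radius.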

| void bodies::Sphere::useDimensions | ( | const shapes::Shape * | shape | ) | [protected, virtual] |

Depending on the shape, this function copies the relevant data to the body.

Implements bodies::Body.

Definition at line 134 of file bodies.cpp.

Member Data Documentation

| Eigen::Vector3d bodies::Sphere::center_ [protected] |

| double bodies::Sphere::radius2_ [protected] |

| double bodies::Sphere::radius_ [protected] |

| double bodies::Sphere::radiusU_ [protected] |

The documentation for this class was generated from the following files: